TETRIS AI WITH REINFORCEMENT LEARNING

Today, artificial intelligence can be found almost everywhere, be it in image recognition, in parts of the car or in graphics cards. There are many different ways to implement artificial intelligence, and depending on the application, they work better or worse. To make it easier to imagine the principle of an AI, descriptive examples such as the self-learning of games like Tetris are sufficient. That's why we decided as a team to develop an AI that teaches itself exactly this - the Tetris game.

For this we used reinforcement learning, a mechanic in which the AI is thrown in at the deep end without any prior knowledge. By recognizing a "good move", the AI gets a reward. How good a move was is determined by the so-called reward function. Through this system, the AI slowly learns which moves are "clever" and which make no sense.

To implement our project, we used the programming language Python and the associated library Tensorflow. To realise the game itself, we used the Pygame library, as this enables a simple implementation of Tetris.

Designing the network using tensorflow

In the first step, we had to think about how to design our neural network. How many inputs do we need, how many layers, how many outputs?

After many experiments, we decided on a network that contained 400 neurons in the first layer. This was chosen because it had to contain the playing field twice, once with the actual playing field, once with only the falling block. This was designed so that the network could learn which neurons represented the falling block, based on the princip of one hot encoding. Then the number of neurons for the second layer was halved, and for the third layer, based on the second, also halved. The last layer contained five neurons. This was designed as the five neurons representing the five possible actions. These five actions were: Move left, move right, rotate the block, drop the block faster or place it directly.

q_net = Sequential()

q_net.add(Flatten())

q_net.add(Dense(400, activation='relu'))

q_net.add(Dense(200, activation='relu'))

q_net.add(Dense(100, activation='relu'))

q_net.add(Dense(5, activation='linear'))

q_net.compile(optimizer=tf.optimizers.Adam(learning_rate=0.001), loss='mse')Building this network with Tensorflow is quite simple, as it requires very little code. First, it is defined that the layers of the neural network are traversed sequentially, then the incoming data are truncated to one dimension. This is followed by the fully connected layers already defined above, which are using the Rectified Linear Unit (ReLU) activation function. The last layer has a linear activation function. Then, to produce the final network, it is compiled using the Adam optimizer.

Reinforcement Learning using Deep Q-Learning

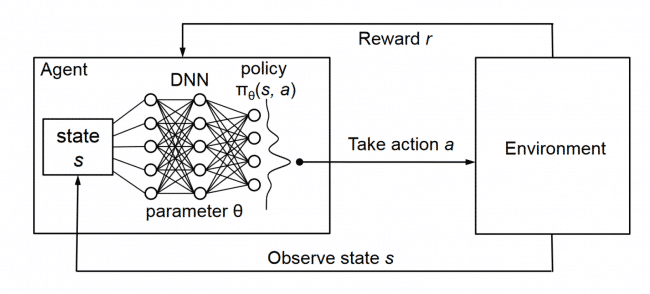

In reinforcement learning, the neural network we created above is embedded in an agent. The agent moves in an environment (in this case Tetris) and observes it. Based on its observations, the agent decides on its next action by feeding the observation (also called state) into the neural network. The best evaluated action is executed. For the executed action, the agent receives a reward, depending on how well this action matches the current state.

For our agent we used a version of double Q-learning. Through this, our agent gained a replay memory, in which the last N states, actions and their rewards were stored. Each iteration a few tuples were sampled from that memory to train the network. Another important part of double Q-learning is the second network that is introduced to our agent. This network, called the 'target network', is used to estimate the maximum reward that could be gained in the future, when the agent chooses a given action. This network is architecturally equivalent to the first network and is updated regularly using the trained first network.

Reward function

To be able to use reinforcement learning, we had to come up with a reward function that would determine how much the AI would be rewarded for a certain move. We researched different approaches and decided on the following function.

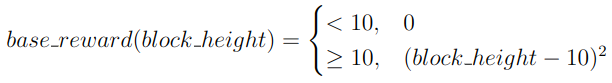

First off, if the current action is happening in the lower half of the field (field height being 20), the current action should receive a base reward depending on the height of the block. We used this to try to encourage the neural network to survive longer and keep the overall stack of blocks low. We called this reward 'base_reward'.

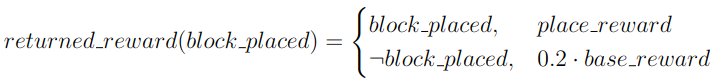

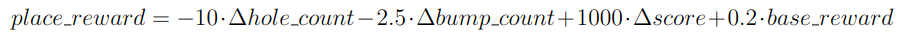

If the network managed to place a block down, this base reward was used in conjunction with other factors to calculate the overall reward for placing that block. If the current action did not place the block down, the base reward was returned.

The 'place_reward', as we called it, included the 4 following factors:

- The number of holes in the stack of blocks

- i.e. the number of cells that have blocks above them

- The overall bumpiness of the stack

- given as the sum of height differences of neighboring columns

- The game score

- The previously calculated base reward

The changes in these factors (after the network made it's move) would be weighted and summed to calculate the final reward.

Results

After our reward function and our neural network were designed and the first test runs seemed promising, we started the long-term training. Here we considered that it would make sense to deal with a "know-nothing" AI like a child learning to play the piano. You don't teach the child the complete music theory, how to read music and how to play with two hands at the same time, but little by little, when it has mastered a part of it. That's why we first let the AI play with a 2 by 2 block until it played the game almost perfectly. Then we added the four-by-one block and waited for the training to reach a passable playing style. We then added a T-shaped block to the pool of blocks. It turned out that the AI could not cope with the complexity of a pool with three blocks and learned very little. As the project time was coming to an end, we thought about which influences could improve the performance. We were not able to test these theses in this project and they will be taken up in future work.

Learnings & Tips

- Quick results are unlikely as the training can take a long time

- Expectations at the beginning should be reduced, as otherwise one gets into a "burnout" phase too quickly

- Hardware is important (even if remote servers are slower than the local machine, your own hardware will thank you)

- Parallelise! If you have several ideas for improvement and the hardware is sufficient, execute them all simultaneously!

- Optimise at an early stage

- Visualisation is nice, but don't overdo it, it destroys the performance in terms of the time it takes

The code for this project is available on GitHub