Clustering

As a part of the “Data Science” master course at the University of Applied Sciences Kaiserslautern in the winter semester of 2022/23, we investigated clustering, which is a type of unsupervised machine learning. In this article, we provide an overview of what clustering is and where to apply clustering, as well as present two popular clustering algorithms that we used in our R-notebooks.

The notebooks, the datasets, and a Wiki describing different kinds of clustering are available in our GitHub repository

What is Clustering?

Clustering is identifying previously unknown groups of data examples based on their similarity. The resulting clusters can be used to understand the structure of the data and identify patterns.

Common applications of clustering include:

- anomaly detection;

- data reduction;

- image segmentation;

- market segmentation;

- medical imaging;

- social network analysis, etc.

There are a lot of different clustering algorithms existing, and the choice of a clustering algorithm depends on the users' criteria and initial data. We have considered two of the most popular ones and created R notebooks to represent real-data examples of using these.

Hierarchical Clustering

Hierarchical clustering and dendrograms are powerful tools used to organize and analyze data in R.

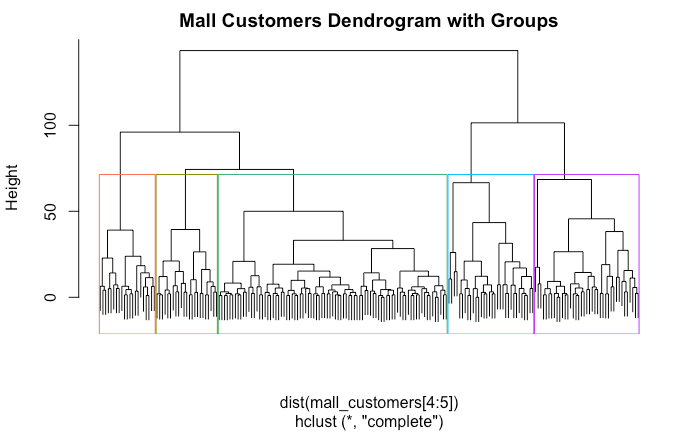

Dendrograms are graphical representations of hierarchical clustering that show the hierarchical relationships between the data points being clustered. They provide a way to quickly visualize the clustering results and compare different clusters. Using R, it is easy to create and interpret dendrograms to gain insights into the data.

In our notebook, we used the Mall Customers dataset as an example for hierarchical clustering. The dataset contains data about people's gender, age, annual income, and spending score.

To perform hierarchical clustering in R we will use the hclust function. There are several different linkage methods that can be used with hclust to determine how the objects are grouped, and these include complete, single, average, centroid, and ward.

- Complete linkage clustering works by calculating the maximum distance between any two points in each cluster and then combining the two clusters with the greatest distance;

- Single linkage clustering works by calculating the minimum distance between any two points in each cluster and then combining the two clusters with the smallest distance;

- Average linkage clustering works by calculating the average distance between any two points in each cluster and then combining the two clusters with the smallest average distance;

- Centroid linkage clustering works by calculating the distance between the centroids of two clusters and then combining the two clusters with the smallest centroid distance;

- Ward's linkage clustering works by measuring the increase in variance for every two clusters that are combined and then combining the two clusters that result in the smallest increase of variance.

For this blog post, we will go with the complete linkage method, since a comparison of other methods can be found in the notebook. Feel free to take a look at the notebook and see for yourself what impact using different linkage methods or more than two dimensions would have.

Results

When performing clustering there is always something you want to learn from data. With the mall customer dataset, we want to find out if we can find groups of similar customers with similar behavior. We only used the columns "Annual Income" and "Spending Score".

Running hclust in R requires only a few lines of code:

# Perform hierarchical clustering

clusters <- hclust(dist(mall_customers[4:5]))

# Plot dendrogram using ggdendrogram

ggdendrogram(clusters, main="Dendrogram", labels=FALSE)

Reading a dendrogram is pretty easy:

- A dendrogram is read from top to bottom, with each branch representing a split in the data set.

- The length of the branches represents the distance between the clusters, with longer branches indicating larger distances.

- The leaf nodes at the bottom of the dendrogram represent the individual data points that were clustered together.

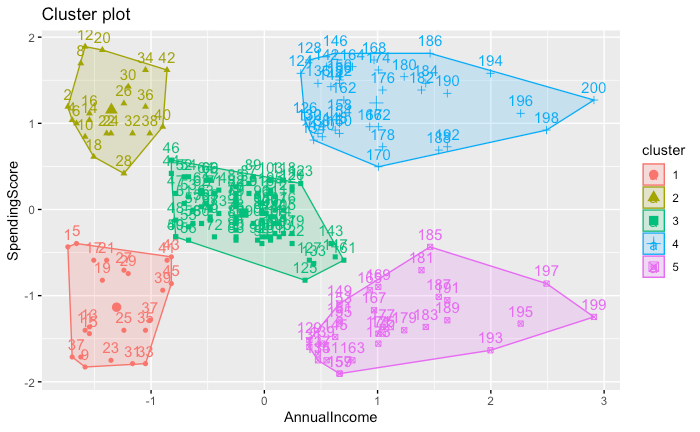

Now that we have the dendrogram and know that we want 5 clusters we can cut the tree at k = 5 and visualize our clusters:

# Set k

k = 5

# Cut Dendrogram into k clusters using cutree

clusters_cut <- cutree(clusters, k)

# Plot clusters

fviz_cluster(list(data = mall_customers[4:5], cluster=clusters_cut))

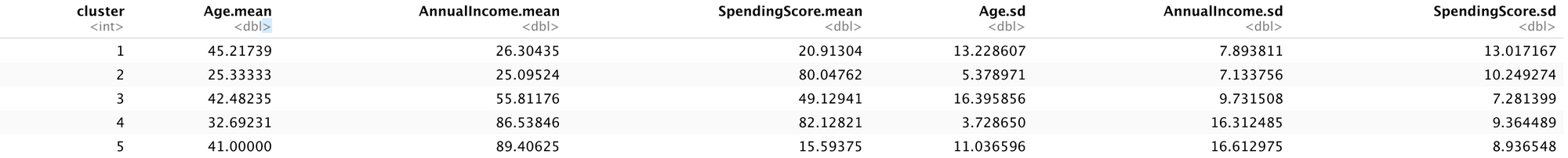

As we can see we receive cohesive clusters. If we want further information about those clusters we can aggregate their properties and compare them using a table:

# Bind results of clusters

results <- cbind(mall_customers[3:5], cluster=clusters_cut)

# Get mean values of each cluster using aggregate function

agg_mean <- aggregate(results[1:3], by=list(cluster=results$cluster), mean)

# Get standard deviation values of each cluster using aggregate function

agg_sd <- aggregate(results[1:3], by=list(cluster=results$cluster), sd)

# Merge resulting tables into one table

agg <- merge(agg_mean, agg_sd, by="cluster")

# Rename column names to useful names instead of .x and .y

names(agg) <- c("cluster", paste0(names(agg_mean)[-1], ".mean"), paste0(names(agg_sd)[-1], ".sd"))

# Plot the merged table with renamed columns

agg

The low standard deviation of the clusters implies that the data points are closely grouped together, resulting in cohesive clusters. Marketers can use this data to define the age, annual income, and spending score of a target group, as well as identify the largest and smallest customer groups. With this information, marketers can create more effective campaigns for e.g. customer acquisition.

K-means

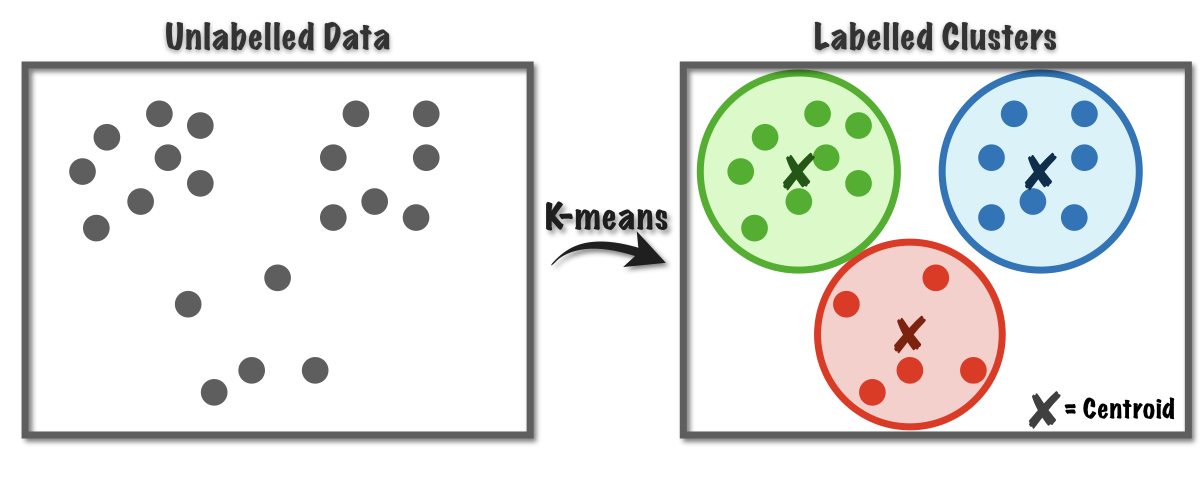

The next algorithm, that we considered is K-means. K-means algorithm is simple to implement, efficient, and very intuitive. We chose it because it is widely used and can be quickly applied to large real-life datasets due to its simplicity and speed.

The main point of it is to divide a group of data points into a specified number (k) of clusters, where each data point is assigned to the cluster with the closest mean value. The goal is to find clusters such that the data points within each cluster are as similar as possible to each other and as dissimilar as possible to data points in other clusters.

How it works

The algorithm consists of 5 steps:

1. Initialize k centroids (randomly or based on some heuristic).

2. Assign each data point to the nearest centroid, forming k clusters.

3. Calculate the mean of each cluster.

4. Reassign each data point to the nearest centroid using the updated means.

5. Repeat steps 3 and 4 until the assignments do not change or some other stopping criteria are met.

Pros and Cons

K-means has the following advantages:

+ Runs in linear time in most cases;

+ Straightforward to implement;

+ Deals well with large datasets (fast and scalable);

+ Adapts to new examples very frequently;

+ Guarantees convergence.

But as with any algorithm, it has some disadvantages as well:

- Centroids initialization sensitivity;

- The number of clusters needs to be chosen manually;

- Sensitive to outliers;

- Does not work very well with nonconvex shapes;

- Tries to generate equal-sized clusters;

- Scaling with the number of dimensions.

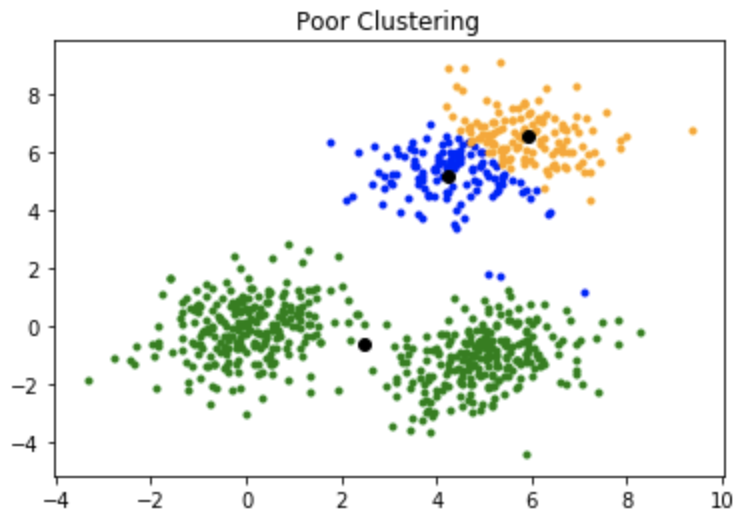

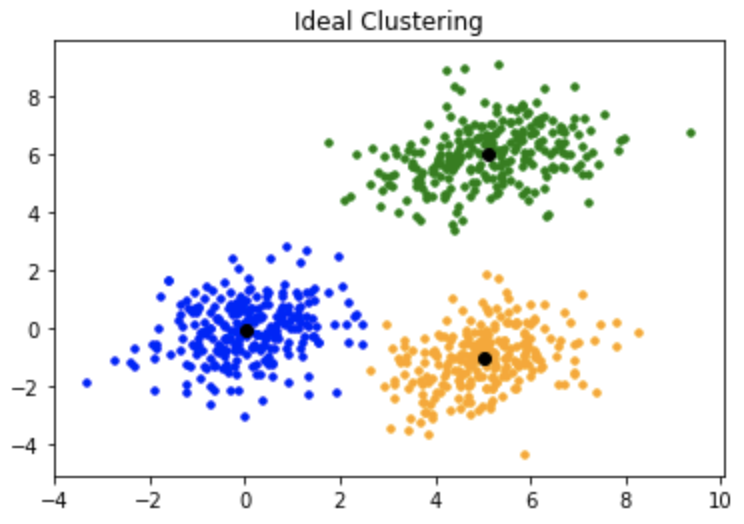

The results of k-means are easy to interpret and can be used for further analysis. By successful clustering, we will see well-defined, not-overlapping clusters.

Although, in real-world datasets, we often experience, that the clusters are close to each other and far from the ideal picture.

Results

For an example of using k-means, we performed the credit card customer segmentation. For that, we used the Credit Card Dataset for Clustering, which summarizes the usage behavior of 8950 active credit card holders during the last 6 months.

Those are the steps we did in order to perform the K-means clustering on the chosen dataset:

1. Data Exploration and Data Preparation. It is important to understand the underlying structure of the data and prepare it for applying the algorithm. This can include identifying missing values and outliers and scaling the variables to the same dimension. Unprepared data can lead to suboptimal clusters.

2. Feature Engineering. The k-means algorithm is sensitive to the number of variables. As the number of variables increases, it can lead to over-fitting and slow convergence. To reduce the dimensionality of the data, Principal Component Analysis (PCA) can be used. PCA projects the original dataset onto a new set of axes that account for the most variation in the data. These new axes (principal components) are linear combinations of the original variables that are ordered by the amount of variance they explain. By using only the first few principal components, you can retain most of the variation in the data while reducing the number of variables. PCA allows K-means to focus on the most important features of the data, reducing the influence of noise and irrelevant variables.

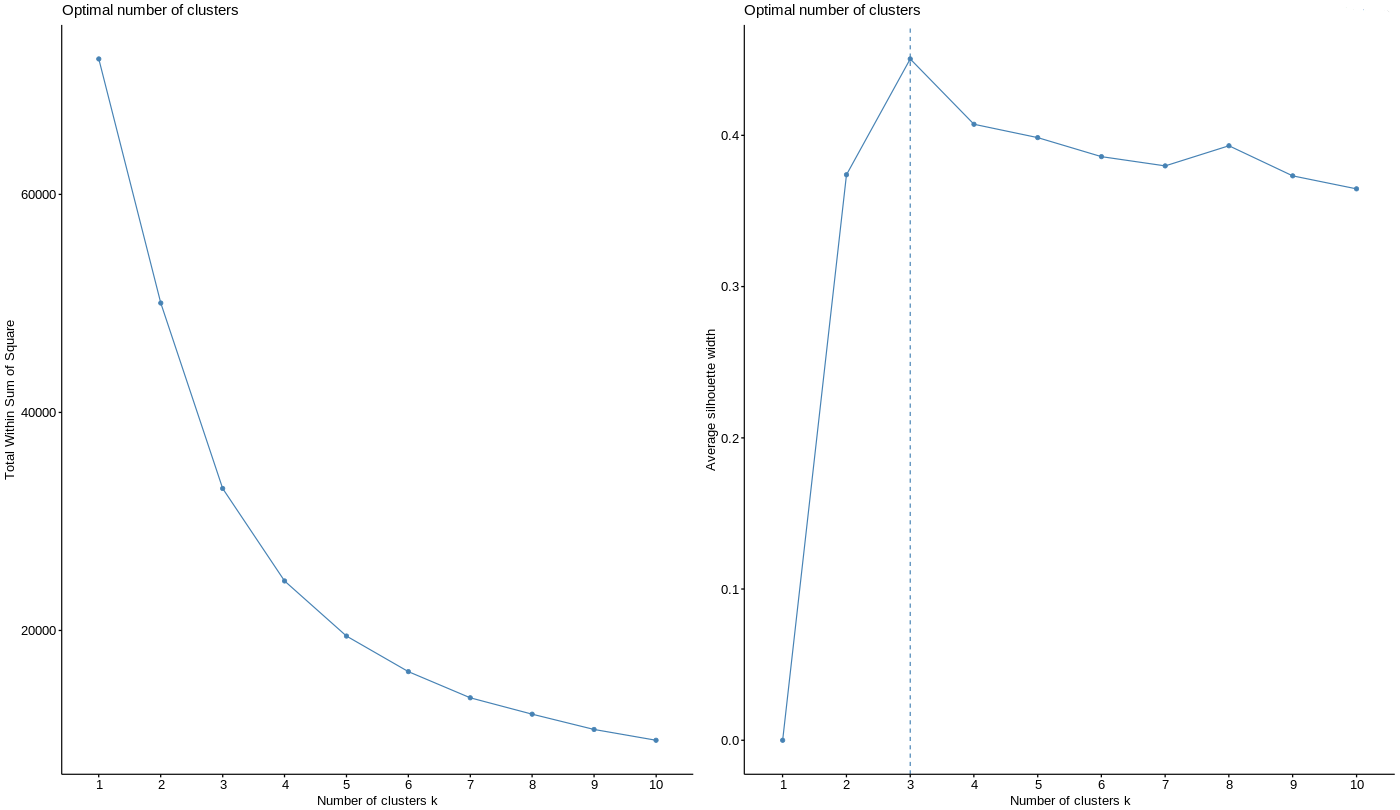

3. Choosing the k-value. The following methods can be used to find an optimal number of clusters:

Elbow method - looks at the within-cluster sum of squares (WCSS), and plots it as a function of the number of clusters. The idea behind the elbow method is that as the number of clusters increases, the WCSS decreases; however, at some point, the decrease in WCSS begins to slow down, forming an "elbow" shape when plotted on a graph. This point is considered the optimal number of clusters. Sometimes defining the elbow point is, however, not easy, as the WCSS can keep changing the speed at which it decreases at several points.

Silhouette method - looks at the similarity of each point to the points in its own cluster compared to the next closest cluster. It then plots the silhouette coefficient for each point, where a coefficient close to 1 indicates that the point is well-matched to its own cluster and clusters are easily distinguishable, and a coefficient close to -1 indicates that the point is mismatched to its own cluster. The optimal number of clusters is chosen as the number of clusters that result in the highest overall silhouette coefficient. The silhouette method is considered more robust and is less sensitive to the initial conditions.

We tried both methods and decided to rely on the silhouette method and choose the k-value equal to 3:

4. Performing clustering. Running K-means algorithm in R:

# Set seed

set.seed(1234)

# Run K-means

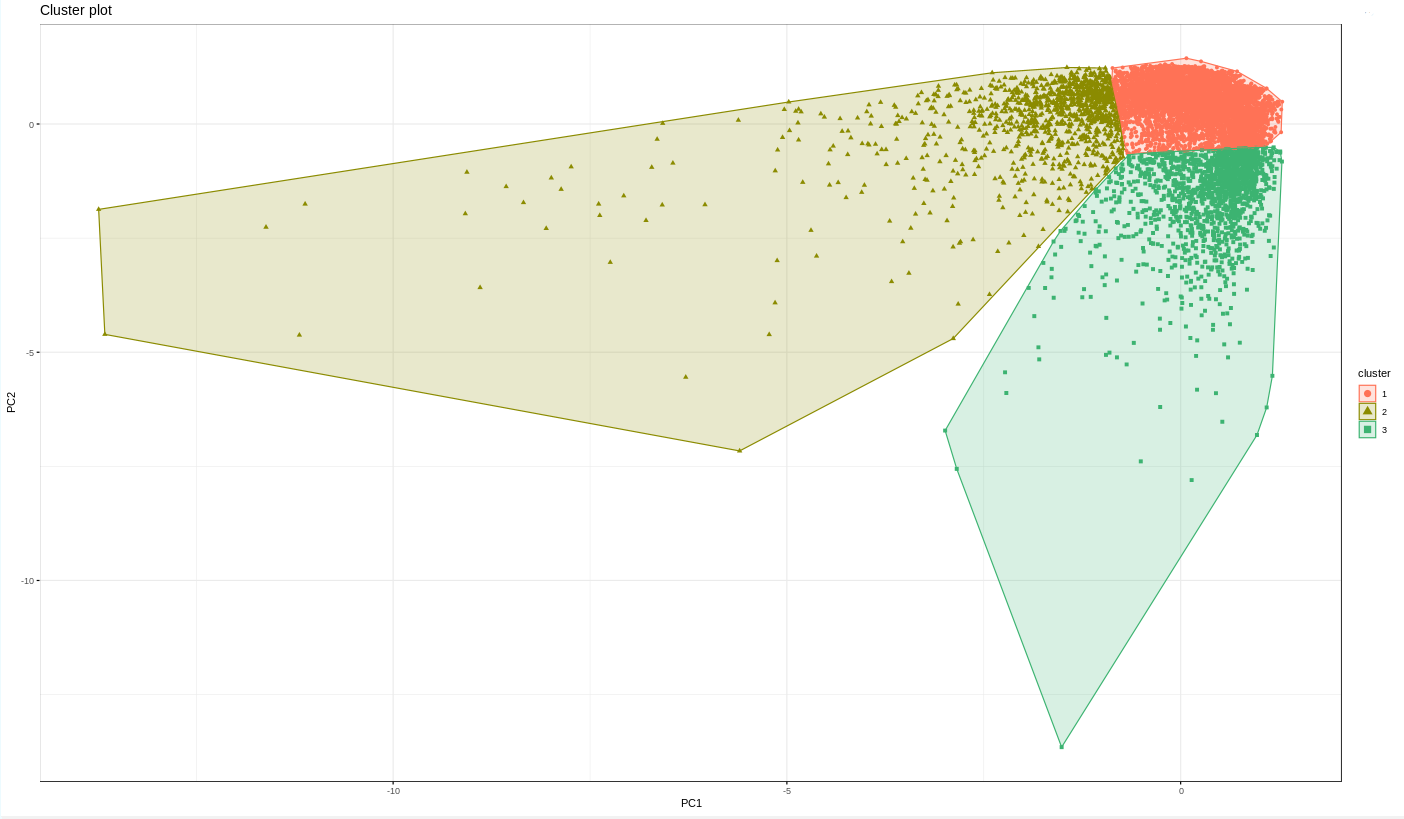

pca_cards_clust <- kmeans(pca_cards, centers = 3)The clusters are represented on a 2D plot using fviz_cluster function from the factoxtra package:

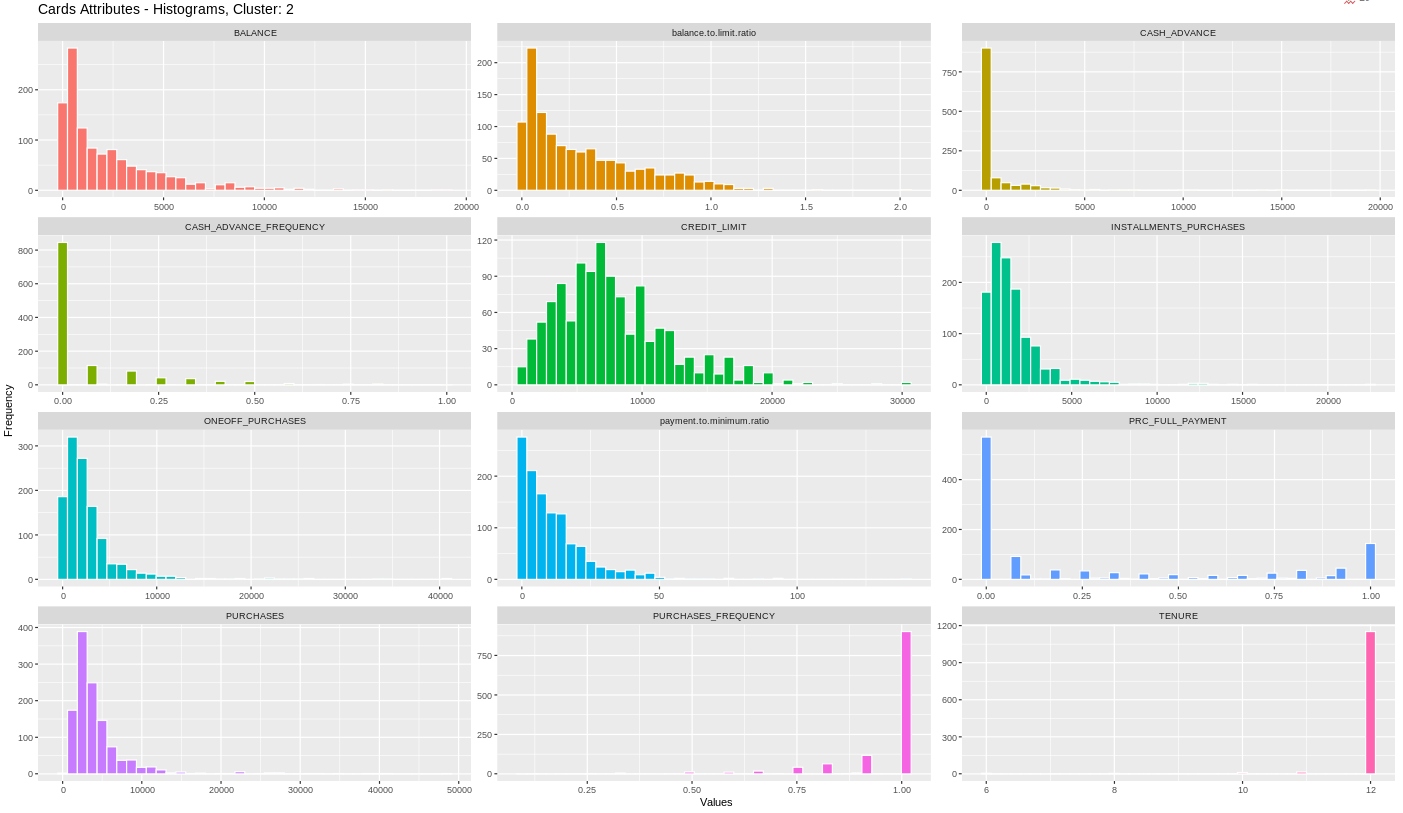

5. Clustering analysis. To analyze the clusters that we got, we added some new attributes to the dataset (e.g. balance-to-limit ratio to depict how much percentage of their limit the customers get on their balance). Then, we plotted the scatter plots of different attributes and found the means of each attribute grouped by cluster. To look at the data distribution within each of the clusters we plotted histograms of the most interesting attributes:

# run a block k-value times

for (i in 1:pca_cards_clust$iter)

{

# Print the plots for each cluster

print(cards[cards$cluster == i, chosen.attributes] %>%

# reshape data from wide format to long format

gather(Attributes, value, -"cluster",) %>%

# initialize plot object

ggplot(aes(x = value, fill = Attributes)) +

# visualize distribution of each attributes

geom_histogram(colour = "white", show.legend = FALSE, bins = 40) +

# create a 2-D layout

facet_wrap(~Attributes, scales = "free", ncol = 3) +

# add labels and title

labs(x = "Values", y = "Frequency",

title = paste("Cards Attributes - Histograms, Cluster:", i,

sep = " ")) + theme_grey())

}

After the analysis, we could identify three different groups of cardholders in the dataset:

- Those who hardly use the benefits of their credit cards. They do not seem to be an interesting target for the bank, though it could be worth a try to work on advertisements of the bank services for this group of people.

- Use their credit card a lot. These clients are the most loyal to the bank, they make a lot of purchases and have a good credit history. The bank must have a lot of benefits from them.

- These customers do not make any purchases - probably because their limit is already full, and even the balance is being transferred from earlier months. They do not really pay in time what they due to the bank. The bank must get much interest from these clients as they do not pay enough minimum payments.

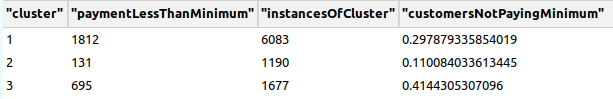

In addition, we calculated how many customers in each cluster do not pay the minimum for their balance. The results were shocking: over 40% of the card holders from the third cluster do not pay even the minimum they monthly due to the bank:

cards %>%

# grouping data for applying the operations to each cluster

group_by(cluster) %>%

# count customers who pay less than their minimum payment

summarize(paymentLessThanMinimum = sum(payment.to.minimum.ratio < 1),

# count instances in each cluster

instancesOfCluster = length(cluster)) %>%

# find the ratio of people not paying the minimum to all the people in the cluster

mutate(customersNotPayingMinimum = paymentLessThanMinimum/instancesOfCluster)

Conclusion

In conclusion, Hierarchical Clustering and K-means are both powerful algorithms for grouping similar data points of data. We have learned that unsupervised machine learning algorithms can successfully be applied to the different tasks of understanding real-world datasets.

K-means clustering can help identify patterns and similarities among credit card holders, such as common spending habits and payment patterns. The banks can also use this information to personalize their services in order to target certain groups of customers.

Hierarchical clustering analysis can provide valuable insights into customer data, and the resulting clusters can be used to inform marketing strategies.

By clustering it is essential to first analyze the considered data and, after the clustering has been performed, to be able to interpret the results with domain knowledge.